The flat screen had an extraordinary run. Forty years of personal computing, smartphones, tablets, and monitors, and the dominant interface paradigm remained fundamentally the same: a two-dimensional rectangle of light that you look at rather than through or into. The reasons for this longevity were practical — flat screens are cheap, reliable, and good enough — but the limitations were always apparent. All the information in the world was available, but it was behind glass, in a frame, separated from the physical space you actually inhabited.

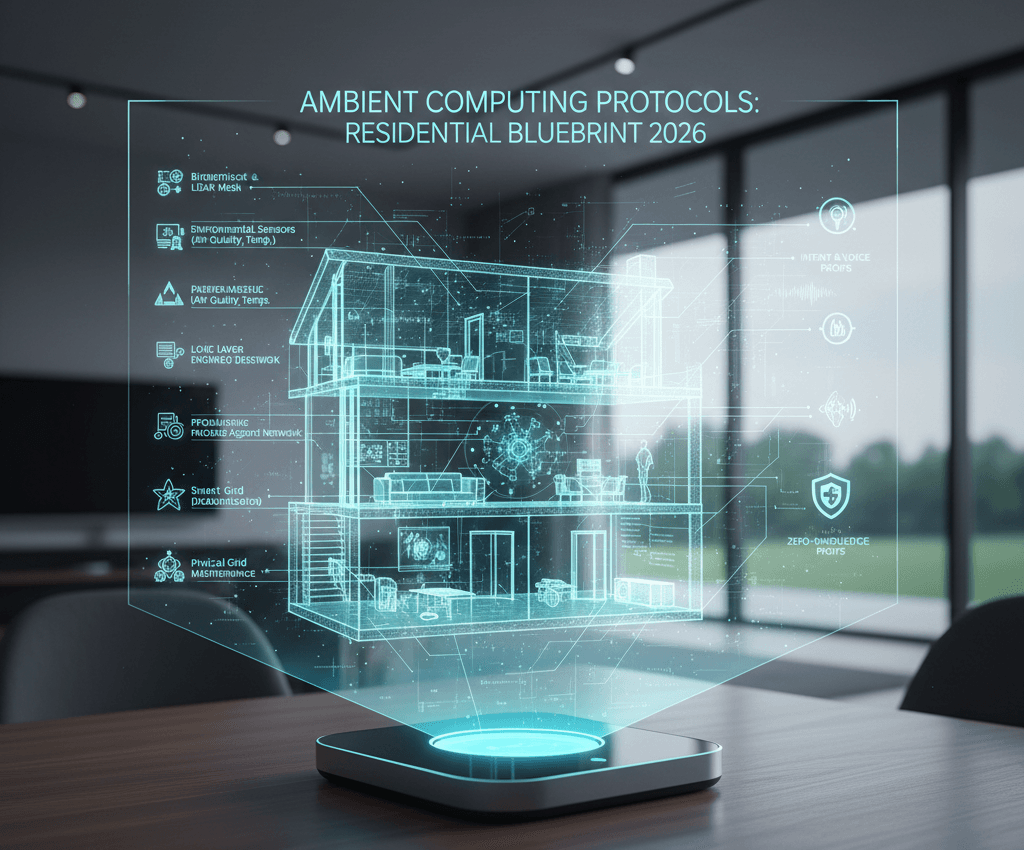

Holographic edge intelligence systems represent one of the most ambitious attempts to change this relationship. The “holographic” element here doesn’t require the science-fiction hologram, a fully three-dimensional object floating unsupported in space; that physics is genuinely difficult. What it does describe is a category of display and projection technology that embeds information into real physical space, overlaying, extending, or replacing the traditional screen. The “edge intelligence” element describes the computational architecture that makes this possible without intolerable latency.

The Technology Landscape

The current state of holographic display technology is a mix of genuine progress and ongoing limitation. Microsoft’s HoloLens, Magic Leap, and most recently Apple’s Vision Pro represent the high end of mixed reality devices: wearable systems that overlay high-resolution digital content onto physical space with sufficient precision to create a convincing sense of co-presence. The display quality, particularly in Apple’s system, is remarkable. But form factor, weight, and battery life constraints mean that none of these are yet products people wear throughout a working day.

Projection-based holographic displays — systems that create floating or seemingly three-dimensional images in space without a wearable — are more limited. Light-field displays, volumetric displays using spinning diffuse materials, and persistence-of-vision systems all exist and have specific applications, but they require controlled environments, specific viewing angles, or physical enclosures that limit their practical deployment.

Where Edge Intelligence Enters

The latency requirement for credible holographic AR is extremely demanding. For the digital overlay to appear anchored to a real object (stable, not swimming or lagging as your head moves), the system needs to track head position, re-render the 3D scene, and update the display in well under ten milliseconds. Latency above this budget breaks the perceptual illusion and causes the nausea-inducing swimming effect that has plagued AR development for years.
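To make the swimming effect concrete, here is a minimal sketch of the arithmetic behind it: while the system is catching up, the head keeps turning, so a world-anchored overlay is drawn roughly (head speed × latency) away from where it should be. The function names, the 100 degrees-per-second head turn, and the 40 pixels-per-degree display density are illustrative assumptions, not figures from any particular headset.

```python
def misregistration_deg(head_speed_deg_per_s: float, latency_ms: float) -> float:
    """Angle the head sweeps through during one latency interval.

    This is a first-order estimate of how far a world-anchored overlay
    appears to slip from its target while the display catches up.
    """
    return head_speed_deg_per_s * latency_ms / 1000.0


def misregistration_px(head_speed_deg_per_s: float, latency_ms: float,
                       px_per_deg: float) -> float:
    """The same slip expressed in display pixels."""
    return misregistration_deg(head_speed_deg_per_s, latency_ms) * px_per_deg


if __name__ == "__main__":
    HEAD_SPEED = 100.0   # assumed head turn rate, degrees per second
    PX_PER_DEG = 40.0    # assumed display density, pixels per degree
    for latency_ms in (5, 10, 20, 50):
        deg = misregistration_deg(HEAD_SPEED, latency_ms)
        px = misregistration_px(HEAD_SPEED, latency_ms, PX_PER_DEG)
        print(f"{latency_ms:>3} ms latency: {deg:.2f} deg slip, about {px:.0f} px")
```

Under these assumed numbers, even ten milliseconds of delay moves the overlay by a full degree during a moderate head turn, which is why the budget is so tight.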

Cloud-based processing cannot meet this latency requirement. The round-trip time to a data centre and back, even on a fast connection, is too slow. This is where edge intelligence — the deployment of significant computational resources within the device itself or in immediately local infrastructure — becomes essential. The processing has to happen close to the display, in milliseconds, with no tolerance for network variability.
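The budget argument above can be sketched as simple addition. The stage names and durations below are hypothetical placeholders chosen only to illustrate the shape of the problem, and the 30 ms round trip is an assumed figure rather than a measurement; only the ten-millisecond ceiling comes from the discussion above.

```python
from dataclasses import dataclass

BUDGET_MS = 10.0  # ceiling taken from the text; real targets vary by device


@dataclass(frozen=True)
class Stage:
    name: str
    ms: float


# Hypothetical on-device pipeline; the durations are illustrative only.
ON_DEVICE = [
    Stage("head tracking", 2.0),
    Stage("render and reproject", 4.0),
    Stage("display scan-out", 3.0),
]


def total_ms(stages, network_rtt_ms: float = 0.0) -> float:
    """Sum of per-stage latency plus any network round trip."""
    return sum(stage.ms for stage in stages) + network_rtt_ms


def fits_budget(stages, network_rtt_ms: float = 0.0,
                budget_ms: float = BUDGET_MS) -> bool:
    """True if the whole pipeline finishes within the latency budget."""
    return total_ms(stages, network_rtt_ms) <= budget_ms


if __name__ == "__main__":
    for label, rtt in (("on-device", 0.0), ("cloud, assumed 30 ms RTT", 30.0)):
        verdict = "fits" if fits_budget(ON_DEVICE, rtt) else "exceeds"
        print(f"{label}: {total_ms(ON_DEVICE, rtt):.1f} ms, {verdict} the budget")
```

The point of the sketch is that the network term alone can exceed the entire budget, so no amount of optimisation in the other stages recovers it.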

Recent advances in neuromorphic chips, processors designed to mimic neural architecture and perform certain inference tasks with extreme efficiency, are one pathway toward meeting the edge processing requirement. Apple’s M-series processors, with their unified memory architecture, represent another. The computational gap between what’s needed for compelling holographic AR and what’s available in a wearable form factor is closing, but it hasn’t yet closed.

Industrial and Professional Applications Coming First

The consumer market for holographic display systems will lag behind industrial and professional adoption, as it has for most AR technology. Surgery, where a holographic overlay of a patient’s imaging data precisely positioned over the surgical site can reduce error and procedure time, is an active and well-funded application. Manufacturing assembly guidance, where workers see step-by-step instructions superimposed on the actual component they’re working on, is in deployed use. Architectural visualisation, military situational awareness displays, and remote collaboration tools (where two people in different locations interact with the same holographic object) are all areas where the technology’s practical value justifies putting up with its current limitations.

The consumer path is longer but visible. As processing power increases and form factor constraints ease, glasses that overlay information on your physical world, rather than requiring you to look at a screen, will become more plausible. When they arrive (not if), the implications for how people interact with information, space, and each other will be substantial enough to warrant the significant investment and research attention currently flowing into the field.